Daniel Fernandez posts a nice summary of some of the problems algorithmic traders have experienced over the past few years. If you’ve been wondering why your expert advisor isn’t making money, well, you’re not alone.

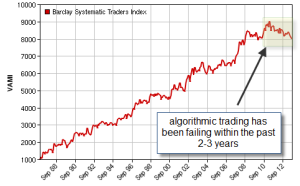

Daniel points out the terrible performance of the Barclays systematic trading index and its nearly three years of continuous losses. Even the pros are losing money consistently.

Tough Times with Algorithmic Trading

Key sections:

It is no secret that algorithmic trading had some “golden years” between 2008-2011. Through this period – most notably due to the high directional volatility of the financial crisis – systems based on a wide variety of market characteristics were able to obtain high amounts of profit, with an almost completely negative correlation with equity markets. Among the high-performers found during this period, trend followers were perhaps the most impressive, with some systems achieving returns of more than 100% of capital within this period, with little drawdown whatsoever. During these years everyone trading algorithms was making a killing. Then, change happened.

The answer seems to be simple and at the same time incredibly complex: fundamental influence and uncertainty. Algorithmic trading systems are all designed with the idea that some historical assumption will continue to be true in the future. This assumption can be that price tends to break at a certain hour, that momentum created in one direction leads to continuations, that two instruments are co-integrated, etc. When these assumptions break, the algorithms fail because they have no way to know that under current market conditions their assumptions are no longer valid. This “breaking up” of algorithms means that we usually need to take losses to realize that something has changed – to remove or modify our strategy – and this makes us invariably less reactive than human traders. The strength of algorithmic trading, its high capacity to exploit structural characteristics, becomes its weakness when the underlying structure changes.
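
To make the "you have to take losses before you notice" point concrete, here is a minimal sketch of how one might monitor a single assumption, in this case that momentum continues from one bar to the next. It is not Daniel's method. The function name `momentum_hit_rate_monitor`, the 20-bar window, the 0.5 threshold, and the synthetic return series are all assumptions chosen purely for illustration.

```python
from collections import deque


def momentum_hit_rate_monitor(returns, window=20, threshold=0.5):
    """Track how often momentum continued over the last `window` bars.

    A "hit" means the current return has the same sign as the previous one.
    Returns a list of (bar_index, hit_rate, assumption_ok) tuples, starting
    once the rolling window is full.
    """
    hits = deque(maxlen=window)  # rolling record of hits (1) and misses (0)
    results = []
    for i in range(1, len(returns)):
        hits.append(1 if returns[i - 1] * returns[i] > 0 else 0)
        if len(hits) == window:
            rate = sum(hits) / window
            results.append((i, rate, rate >= threshold))
    return results


# Hypothetical data: 40 trending bars, then 40 bars that flip sign every bar.
trending = [0.01] * 40
choppy = [0.01 if k % 2 == 0 else -0.01 for k in range(40)]
returns = trending + choppy

results = momentum_hit_rate_monitor(returns)
regime_change = len(trending)
first_break = next(i for i, rate, ok in results if not ok)
print(f"regime changed at bar {regime_change}, flagged at bar {first_break}")
```

The monitor only raises a flag several bars after the market has stopped trending, and a momentum system would have been losing on each of those bars. A shorter window reacts faster but throws more false alarms, which is the same trade-off the quote describes.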